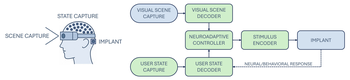

Rather than pursuing a (degraded) imitation of natural sight, bionic vision might be better understood as a form of neuroadaptive XR: a perceptual interface that forgoes visual fidelity in favor of delivering sparse, personalized cues shaped (at its full potential) by user intent, behavioral context, and cognitive state.

Topic: Visual Prosthesis

Researchers Interested in This Topic

- Ruyi Cao, Honors Student
- Yuchen Hou, PhD Candidate
- Lucas Nadolskis, PhD Student
- Galen Pogoncheff, PhD Candidate
- Lily M. Turkstra, PhD Candidate
- Apurv Varshney, PhD Student

Research Projects

Bionic vision as neuroadaptive XR: Closed-loop perceptual interfaces for neurotechnology

Michael Beyeler. 2025 IEEE International Symposium on Mixed and Augmented Reality Adjunct (ISMAR-Adjunct).

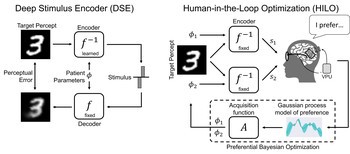

Deep learning-based control of electrically evoked activity in human visual cortex

We developed a data-driven neural control framework for a visual cortical prosthesis in a blind human, showing that deep learning can synthesize efficient, stable stimulation patterns that reliably evoke percepts and outperform conventional calibration methods.

Pehuén Moure, Jacob Granley, Fabrizio Grani, Leili Soo, Antonio Lozano, Rocio López-Peco, Adrián Villamarin-Ortiz, Cristina Soto-Sánchez, Shih-Chii Liu, Michael Beyeler, Eduardo Fernández. bioRxiv.

(Note: PM, JG, and FG are co-first authors. SL, MB, and EF are co-last authors.)

BionicVisionXR: An Open-Source Virtual Reality Toolbox for Bionic Vision

BionicVisionXR is an open-source virtual reality toolbox for simulated prosthetic vision that uses a psychophysically validated computational model to allow sighted participants to “see through the eyes” of a bionic eye recipient.

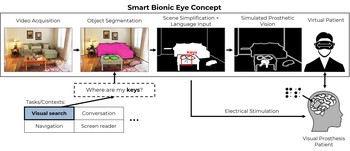

Towards a Smart Bionic Eye

Rather than aiming to one day restore natural vision, we might be better off thinking about how to create practical and useful artificial vision now.

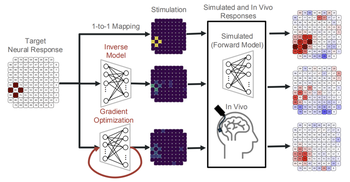

End-to-End Optimization of Bionic Vision

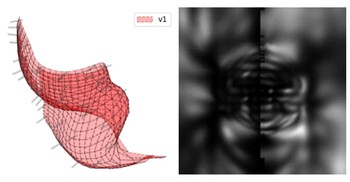

Rather than only predicting the perceptual distortions a given stimulus produces, one needs to solve the inverse problem: what is the best stimulus to generate a desired visual percept?
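To make the inverse problem concrete, here is a minimal pure-Python sketch under strong simplifying assumptions. It uses a hypothetical linear, scoreboard-style forward model in which each electrode adds a Gaussian blob of width `RHO` scaled by its amplitude, a made-up five-electrode layout (`ELECTRODES`), and an assumed amplitude ceiling `max_amp` standing in for safety limits. Amplitudes are recovered with projected gradient descent. This illustrates the idea of inverting a forward model; it is not the method used in the projects described here, and real percepts are nonlinear and device-specific.

```python
import math

# Hypothetical toy forward model (assumption, not a published model):
# each electrode adds a Gaussian blob of width RHO, scaled by its amplitude.
GRID = 9      # percept sampled on a GRID x GRID image
RHO = 1.0     # blob width in pixels (assumed)
ELECTRODES = [(2, 2), (2, 6), (6, 2), (6, 6), (4, 4)]  # (row, col), hypothetical layout


def blob(electrode, r, c):
    """Brightness at pixel (r, c) from a unit-amplitude stimulus on `electrode`."""
    er, ec = electrode
    return math.exp(-((r - er) ** 2 + (c - ec) ** 2) / (2 * RHO ** 2))


# Linear forward operator: PHI[p][i] = contribution of electrode i to pixel p.
PIXELS = [(r, c) for r in range(GRID) for c in range(GRID)]
PHI = [[blob(e, r, c) for e in ELECTRODES] for (r, c) in PIXELS]


def forward(amps):
    """Predicted (flattened) percept for a list of electrode amplitudes."""
    return [sum(w * a for w, a in zip(row, amps)) for row in PHI]


def loss(amps, target):
    """Squared error between predicted and desired percept."""
    return sum((p - t) ** 2 for p, t in zip(forward(amps), target))


def inverse(target, steps=500, lr=0.05, max_amp=2.0):
    """Search for amplitudes whose predicted percept best matches `target`.

    Projected gradient descent on the squared error; after each step the
    amplitudes are clipped to [0, max_amp] (an assumed hardware/safety box).
    """
    amps = [0.0] * len(ELECTRODES)
    for _ in range(steps):
        residual = [p - t for p, t in zip(forward(amps), target)]
        # Gradient of the squared error: 2 * PHI^T (PHI a - target)
        grad = [2 * sum(PHI[p][i] * residual[p] for p in range(len(PIXELS)))
                for i in range(len(ELECTRODES))]
        amps = [min(max(a - lr * g, 0.0), max_amp) for a, g in zip(amps, grad)]
    return amps


if __name__ == "__main__":
    desired = forward([1.0, 0.0, 0.5, 0.0, 1.5])  # a reachable target percept
    print([round(a, 3) for a in inverse(desired)])
```

Because this toy forward model is linear, a bounded least-squares solver would do the same job; gradient descent is shown because the same loop carries over to differentiable, nonlinear forward models.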

Predicting Visual Outcomes for Visual Prostheses

What do visual prosthesis users see, and why? Clinical studies have shown that the vision provided by current devices differs substantially from normal sight.

pulse2percept: A Python-Based Simulation Framework for Bionic Vision

pulse2percept is an open-source Python simulation framework used to predict the perceptual experience of retinal prosthesis patients across a wide range of implant configurations.
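pulse2percept's own models are considerably richer than anything shown here. As a rough illustration of what "predicting the percept for an implant configuration" means, the following pure-Python toy assumes a scoreboard-style model in which every electrode produces an isotropic Gaussian phosphene of width `rho` whose brightness scales linearly with amplitude (no axon streaks, no temporal dynamics). The helpers `brightness`, `grid_implant`, `render`, and `gap_contrast` are hypothetical names for this sketch, not pulse2percept functions. `gap_contrast` compares the brightness halfway between two neighboring electrodes with the brightness at an electrode, showing how electrode spacing decides whether phosphenes stay distinct or blur together.

```python
import math


def brightness(x, y, electrodes, amps, rho):
    """Toy scoreboard-style brightness at visual-field location (x, y).

    Assumption: each electrode adds a Gaussian blob of width rho, scaled
    linearly by its amplitude; contributions simply sum.
    """
    return sum(a * math.exp(-((x - ex) ** 2 + (y - ey) ** 2) / (2 * rho ** 2))
               for (ex, ey), a in zip(electrodes, amps))


def grid_implant(n, spacing):
    """Hypothetical n x n electrode array centered on the origin."""
    offset = (n - 1) / 2
    return [((i - offset) * spacing, (j - offset) * spacing)
            for j in range(n) for i in range(n)]


def render(electrodes, amps, rho, size=21, step=1.0):
    """Sample the predicted percept on a size x size grid centered on the origin."""
    half = (size - 1) / 2
    return [[brightness((c - half) * step, (r - half) * step, electrodes, amps, rho)
             for c in range(size)]
            for r in range(size)]


def gap_contrast(spacing, rho, n=3):
    """Brightness midway between the center electrode and its right neighbor,
    relative to the center electrode, with all electrodes at unit amplitude.
    Near 0: distinct phosphenes. Near 1: phosphenes merge into a blur."""
    electrodes = grid_implant(n, spacing)
    amps = [1.0] * len(electrodes)
    center = brightness(0.0, 0.0, electrodes, amps, rho)
    midway = brightness(spacing / 2, 0.0, electrodes, amps, rho)
    return midway / center


if __name__ == "__main__":
    for spacing in (8.0, 3.0):
        print(spacing, round(gap_contrast(spacing, rho=2.0), 2))
```

With a fixed phosphene width, widely spaced electrodes leave a dark gap between phosphenes, whereas tightly packed ones produce a nearly uniform blur, one reason adding electrodes does not automatically add resolution.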