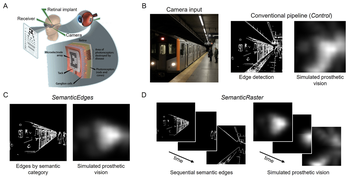

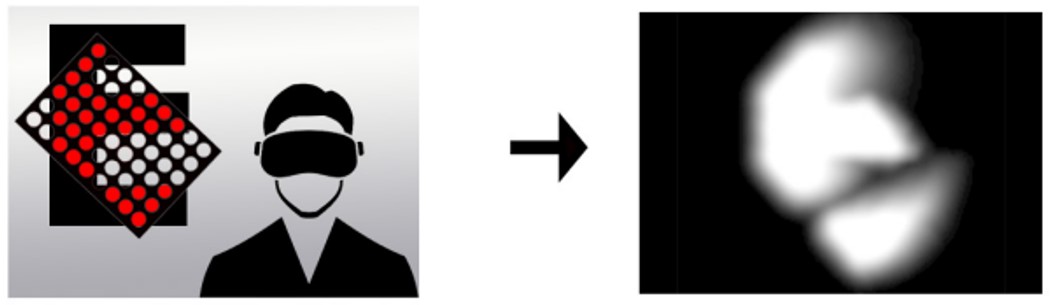

We compare two complementary approaches to semantic preprocessing in immersive virtual reality: SemanticEdges, which highlights all relevant objects at once, and SemanticRaster, which staggers object categories over time to reduce visual clutter.

BionicVisionXR: An Open-Source Virtual Reality Toolbox for Bionic Vision

A major outstanding challenge in the field of bionic vision is predicting what people “see” when they use their devices. The limited field of view of current devices necessitates head movements to scan the scene, which is difficult to simulate on a computer screen. In addition, many computational models of bionic vision lack biological realism.

To address these challenges, we present BionicVisionXR, an open-source virtual reality toolbox for simulated prosthetic vision that uses a psychophysically validated computational model to allow sighted participants to “see through the eyes” of a bionic eye user.

What would the world look like with a #BionicEye? Find out tonight (7-10pm) at #vss2023!

Demo 7, Island Ballroom: https://t.co/7SJMkOkEkM pic.twitter.com/73Vc125LSd

— Michael Beyeler (@ProfBeyeler) May 22, 2023

Project Lead:

PhD Student

Project Affiliate:

Research Assistant

Principal Investigator:

Associate Professor

Publications

Static or temporal? Semantic scene simplification to aid wayfinding in immersive simulations of bionic vision

Justin M. Kasowski, Apurv Varshney, Michael Beyeler. 31st ACM Symposium on Virtual Reality Software and Technology (VRST) ’25

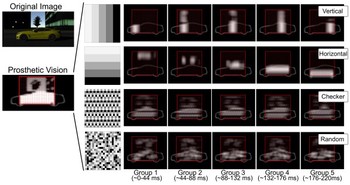

Simulated prosthetic vision confirms checkerboard as an effective raster pattern for epiretinal implants

Using an immersive VR system, we systematically evaluated two behavioral tasks under four raster patterns (horizontal, vertical, checkerboard, and random) and found checkerboard raster to be the most effective.

Justin M. Kasowski, Apurv Varshney, Roksana Sadeghi, Michael Beyeler. Journal of Neural Engineering 22, 046017

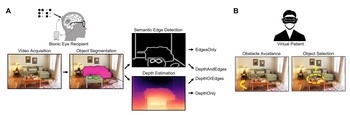

The relative importance of depth cues and semantic edges for indoor mobility using simulated prosthetic vision in immersive virtual reality

We used a neurobiologically inspired model of simulated prosthetic vision in an immersive virtual reality environment to test the relative importance of semantic edges and relative depth cues to support the ability to avoid obstacles and identify objects.

Alex Rasla, Michael Beyeler. 28th ACM Symposium on Virtual Reality Software and Technology (VRST) ’22

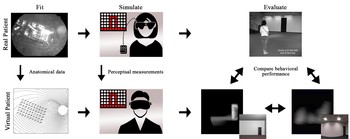

Immersive virtual reality simulations of bionic vision

We present VR-SPV, an open-source virtual reality toolbox for simulated prosthetic vision that uses a psychophysically validated computational model to allow sighted participants to ‘see through the eyes’ of a bionic eye user.

Justin Kasowski, Michael Beyeler. ACM Augmented Humans (AHs) ’22

Towards immersive virtual reality simulations of bionic vision

We propose to embed biologically realistic models of simulated prosthetic vision in immersive virtual reality so that sighted subjects can act as ‘virtual patients’ in real-world tasks.

Justin Kasowski, Nathan Wu, Michael Beyeler. ACM Augmented Humans (AHs) ’21