Rather than aiming to one day restore natural vision, we might be better off thinking about how to create practical and useful artificial vision now.

Apurv Varshney is a PhD student in Computer Science at UC Santa Barbara. He is interested in Mixed Reality (MR) and Human-Computer Interaction. His research goal is to harness MR and visual prostheses to improve accessibility and address the navigational challenges faced by blind people.

Outside the lab, he enjoys biking and playing tennis.

- 3205 BioEngineering

- apurv@ucsb.edu

Honors & Awards

- Outstanding TA Award, CS, UCSB (2025)

Education

- PhD in Computer Science, 2028 (expected), University of California, Santa Barbara

- BTech (Bachelor of Technology) in Computer Science, 2020, Indian Institute of Technology (IIT), Goa

Project Lead

Assistive Technologies for People Who Are Blind

This research explores the integration of computer vision into various assistive devices, aiming to enhance urban navigation and environmental interaction for individuals who are blind or visually impaired.

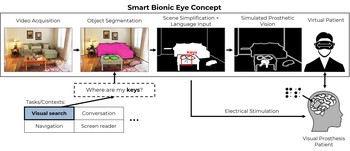

BionicVisionXR: An Open-Source Virtual Reality Toolbox for Bionic Vision

BionicVisionXR is an open-source virtual reality toolbox for simulated prosthetic vision that uses a psychophysically validated computational model to allow sighted participants to “see through the eyes” of a bionic eye recipient.

Project Affiliate

pulse2percept: A Python-Based Simulation Framework for Bionic Vision

pulse2percept is an open-source Python simulation framework used to predict the perceptual experience of retinal prosthesis patients across a wide range of implant configurations.

Publications

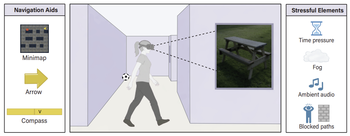

Actionable guidance outperforms map and compass cues in demanding immersive VR wayfinding

We compared three common guidance techniques for immersive VR wayfinding: a directional arrow, a minimap, and a compass.

Apurv Varshney, Lily M. Turkstra, Jiaxin Su, Mable Zhou, Scott T. Grafton, Barry Giesbrecht, Mary Hegarty, Michael Beyeler arXiv

(Note: AV and LMT contributed equally to this work.)

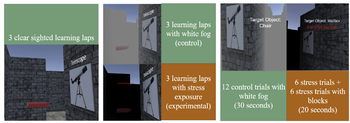

Adaptive training for navigation under stress: Impacts of stress exposure and spatial anxiety

We examine whether stress exposure during environmental learning fosters resilience to stress in subsequent navigation or impairs learning.

Mable Zhou, Apurv Varshney, Kayla P. Salcedo, Scott T. Grafton, Barry Giesbrecht, Michael Beyeler, Mary Hegarty PsyArXiv

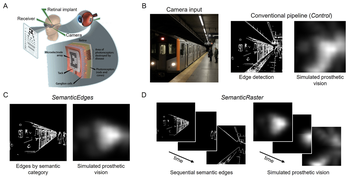

Static or temporal? Semantic scene simplification to aid wayfinding in immersive simulations of bionic vision

We compare two complementary approaches to semantic preprocessing in immersive virtual reality: SemanticEdges, which highlights all relevant objects at once, and SemanticRaster, which staggers object categories over time to reduce visual clutter.

Justin M. Kasowski, Apurv Varshney, Michael Beyeler 31st ACM Symposium on Virtual Reality Software and Technology (VRST) ’25

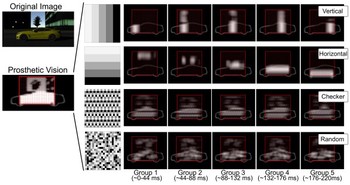

Simulated prosthetic vision confirms checkerboard as an effective raster pattern for epiretinal implants

Using an immersive VR system, we systematically evaluated two behavioral tasks under four raster patterns (horizontal, vertical, checkerboard, and random) and found checkerboard raster to be the most effective.

Justin M. Kasowski, Apurv Varshney, Roksana Sadeghi, Michael Beyeler Journal of Neural Engineering 22 046017

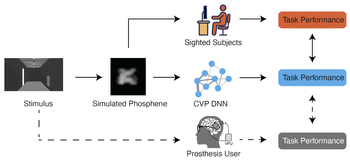

A deep learning framework for predicting functional visual performance in bionic eye users

We introduce a computational virtual patient (CVP) pipeline that integrates anatomically grounded phosphene simulation with task-optimized deep neural networks to forecast patient perceptual capabilities across diverse prosthetic designs and tasks.

Jonathan Skaza, Shravan Murlidaran, Apurv Varshney, Ziqi Wen, William Wang, Miguel P. Eckstein, Michael Beyeler bioRxiv

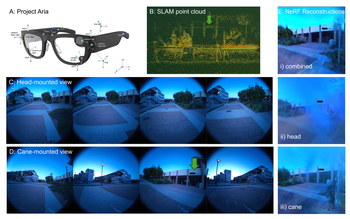

Beyond physical reach: Comparing head- and cane-mounted cameras for last-mile navigation by blind users

We evaluate head- and cane-mounted cameras for blind navigation and show that combining both yields superior spatial perception, guiding the design of hybrid, user-aligned assistive systems.

Apurv Varshney, Lucas Nadolskis, Tobias Höllerer, Michael Beyeler arXiv:2504.19345

Stress affects navigation strategies in immersive virtual reality

We used immersive virtual reality to develop a novel behavioral paradigm to examine navigation under dynamically changing, high-stress situations.

Apurv Varshney, Mitchell Munns, Justin Kasowski, Mantong Zhou, Chuanxiuyue He, Scott Grafton, Barry Giesbrecht, Mary Hegarty, Michael Beyeler Scientific Reports

(Note: AV and MM contributed equally to this work.)