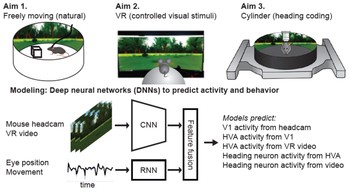

We propose the Mouse vs. AI: Robust Foraging Competition at NeurIPS ’25, a novel bioinspired visual robustness benchmark to test generalization in reinforcement learning (RL) agents trained to navigate a virtual environment toward a visually cued target.

Topic: Computational Neuroscience

Researchers Interested in This Topic

Luke Herbelin

Research Assistant

Yuchen Hou

PhD Candidate

Karolina Huang

Research Assistant

Ryan Klopfenstein

Research Assistant

Adriano Lima

Lab Volunteer

Jeffrey Liu

Research Assistant

Lucas Nadolskis

PhD Student

Galen Pogoncheff

PhD Candidate

Marius Schneider

Postdoctoral Researcher

Eirini Schoinas

Research Assistant

Hannah L. Stone

PhD Student

Nora Thomas

Honors Student

Research Projects

Mouse vs. AI: A neuroethological benchmark for visual robustness and neural alignment

Marius Schneider, Joe Canzano, Jing Peng, Yuchen Hou, Spencer LaVere Smith, Michael Beyeler. arXiv:2509.14446

Predicting Visual Outcomes for Visual Prostheses

What do visual prosthesis users see, and why? Clinical studies have shown that the vision provided by current devices differs substantially from normal sight.

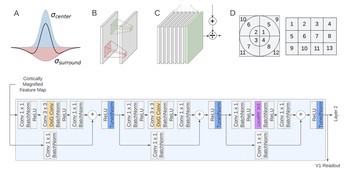

NeuroAI Models of the Visual System

Understanding the visual system in health and disease is a central question for neuroscience and for neuroengineering applications such as visual prostheses.

pulse2percept: A Python-Based Simulation Framework for Bionic Vision

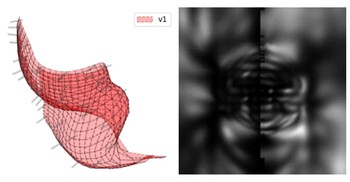

pulse2percept is an open-source Python simulation framework used to predict the perceptual experience of retinal prosthesis patients across a wide range of implant configurations.
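To make "predicting the perceptual experience" concrete, the sketch below re-implements, in plain Python, the idea behind the simplest phosphene model of the kind pulse2percept provides (its `ScoreboardModel`): each stimulated electrode adds a blob of brightness centered on its location, falling off with distance. This is an illustrative sketch, not pulse2percept's code. The function name `scoreboard_percept`, the isotropic Gaussian falloff, the default `rho` value, and the use of retinal coordinates in microns without a mapping to degrees of visual angle are all assumptions made for illustration.

```python
import math


def scoreboard_percept(electrodes, grid_x, grid_y, rho=100.0):
    """Hypothetical scoreboard-style percept on a rectangular grid.

    electrodes: list of (x_um, y_um, amplitude) tuples (assumed retinal coords, microns)
    grid_x, grid_y: sample positions along each axis (microns)
    rho: spatial decay constant of each phosphene (microns, illustrative default)
    Returns a list of rows; percept[i][j] is the brightness at (grid_x[j], grid_y[i]).
    """
    percept = []
    for y in grid_y:
        row = []
        for x in grid_x:
            brightness = 0.0
            # Contributions from all electrodes add linearly in this simple model.
            for ex, ey, amp in electrodes:
                dist2 = (x - ex) ** 2 + (y - ey) ** 2
                brightness += amp * math.exp(-dist2 / (2.0 * rho ** 2))
            row.append(brightness)
        percept.append(row)
    return percept


if __name__ == "__main__":
    grid = [-200.0, -100.0, 0.0, 100.0, 200.0]
    # Two electrodes with different (arbitrary) stimulation amplitudes.
    percept = scoreboard_percept([(-100.0, 0.0, 20.0), (100.0, 0.0, 10.0)], grid, grid)
    for row in percept:
        print(" ".join(f"{v:5.1f}" for v in row))
```

Models of this form ignore effects such as axonal stimulation, which can elongate phosphenes into streaks; that is why richer models are needed to capture how prosthetic vision differs from normal sight.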

Cortical Visual Processing for Navigation

How does the brain extract relevant visual features from the rich, dynamic visual input that typifies active exploration, and how does the neural representation of these features support visual navigation?