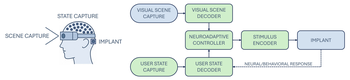

Rather than pursuing a (degraded) imitation of natural sight, bionic vision might be better understood as a form of neuroadaptive XR: a perceptual interface that forgoes visual fidelity in favor of delivering sparse, personalized cues shaped (at its full potential) by user intent, behavioral context, and cognitive state.

Topic: Visual Perception

Researchers Interested in This Topic

Sahiti Doke

Research Assistant

Ethan Fook

Research Assistant

Yuchen Hou

PhD Candidate

Ryan Klopfenstein

Research Assistant

Adriano Lima

Lab Volunteer

Hannah Shea

Research Assistant

Hannah L. Stone

PhD Student

Nora Thomas

Honors Student

Lily M. Turkstra

PhD Candidate

Research Projects

Bionic vision as neuroadaptive XR: Closed-loop perceptual interfaces for neurotechnology

Michael Beyeler. 2025 IEEE International Symposium on Mixed and Augmented Reality Adjunct (ISMAR-Adjunct)

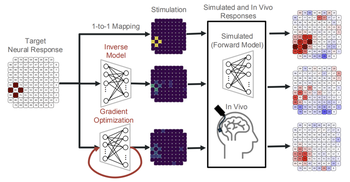

Deep learning-based control of electrically evoked activity in human visual cortex

We developed a data-driven neural control framework for a visual cortical prosthesis in a blind human, showing that deep learning can synthesize efficient, stable stimulation patterns that reliably evoke percepts and outperform conventional calibration methods.

Pehuén Moure, Jacob Granley, Fabrizio Grani, Leili Soo, Antonio Lozano, Rocio López-Peco, Adrián Villamarin-Ortiz, Cristina Soto-Sánchez, Shih-Chii Liu, Michael Beyeler, Eduardo Fernández. bioRxiv

(Note: PM, JG, and FG are co-first authors. SL, MB, and EF are co-last authors.)

Simulating Visual Impairment

How does visual impairment affect visual acuity and the performance of daily activities? Previous studies have shown that a scotoma alters and impairs vision, but the extent to which patient-specific factors affect vision and quality of life is not well understood.