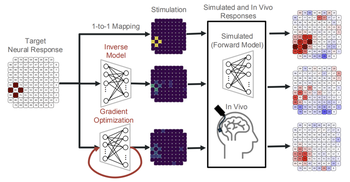

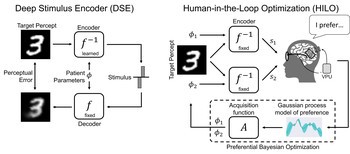

We developed a data-driven neural control framework for a visual cortical prosthesis in a blind human, showing that deep learning can synthesize efficient, stable stimulation patterns that reliably evoke percepts and outperform conventional calibration methods.

Topic: Machine Learning

Researchers Interested in This Topic

Christian Grunt

Research Assistant

Yuchen Hou

PhD Candidate

Diego Linn

Research Assistant

Jack T. Liu

Research Assistant

Jeffrey Liu

Research Assistant

Lucas Nadolskis

PhD Student

Galen Pogoncheff

PhD Candidate

Magnolia Saur

UC LEADS Scholar

Research Projects

Deep learning-based control of electrically evoked activity in human visual cortex

Pehuén Moure, Jacob Granley, Fabrizio Grani, Leili Soo, Antonio Lozano, Rocio López-Peco, Adrián Villamarin-Ortiz, Cristina Soto-Sánchez, Shih-Chii Liu, Michael Beyeler, Eduardo Fernández

bioRxiv

(Note: PM, JG, and FG are co-first authors. SL, MB, and EF are co-last authors.)

End-to-End Optimization of Bionic Vision

Rather than predicting perceptual distortions, one needs to solve the inverse problem: What is the best stimulus to generate a desired visual percept?
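To make the inverse problem concrete, here is a minimal sketch under strong simplifying assumptions. The forward model is a hypothetical linear map `W` from electrode amplitudes to the brightness of a flattened 8x8 percept (not a validated phosphene model). The stimulus is recovered by projected gradient descent with a non-negativity constraint. This is a generic optimization technique chosen for illustration, not the method used in the projects on this page, and all names (`W`, `forward`, `invert`) are hypothetical.

```python
import numpy as np

# Hypothetical toy forward model (an assumption, not a real phosphene model):
# each electrode adds a fixed non-negative spatial pattern (a column of W) to a
# flattened 8x8 "percept", weighted by that electrode's stimulus amplitude.
rng = np.random.default_rng(0)
n_pixels, n_electrodes = 64, 16
W = rng.random((n_pixels, n_electrodes))


def forward(s):
    """Predict the percept (flattened image) evoked by stimulus amplitudes s."""
    return W @ s


def invert(target, steps=2000):
    """Find s >= 0 minimizing 0.5 * ||forward(s) - target||^2.

    Uses projected gradient descent with step size 1 / L, where
    L = sigma_max(W)^2 is the Lipschitz constant of the gradient.
    """
    lr = 1.0 / np.linalg.norm(W, 2) ** 2
    s = np.zeros(n_electrodes)
    for _ in range(steps):
        grad = W.T @ (forward(s) - target)
        s = np.maximum(s - lr * grad, 0.0)  # project onto amplitudes >= 0
    return s


# Desired percept: generated from a known stimulus so recovery can be checked.
true_s = rng.random(n_electrodes)
target = forward(true_s)
s_hat = invert(target)
rel_err = np.linalg.norm(forward(s_hat) - target) / np.linalg.norm(target)
print(f"relative percept error: {rel_err:.2e}")
```

In a real prosthesis the forward model is nonlinear and only approximately known, which is why learned, differentiable models are of interest; the linear toy above only shows the shape of the "percept in, stimulus out" problem.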

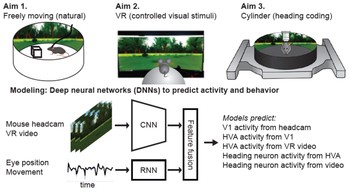

Cortical Visual Processing for Navigation

How does the brain extract relevant visual features from the rich, dynamic visual input that typifies active exploration, and how does the neural representation of these features support visual navigation?