Efficient visual object representation using a biologically plausible spike-latency code and winner-take-all inhibition

Abstract

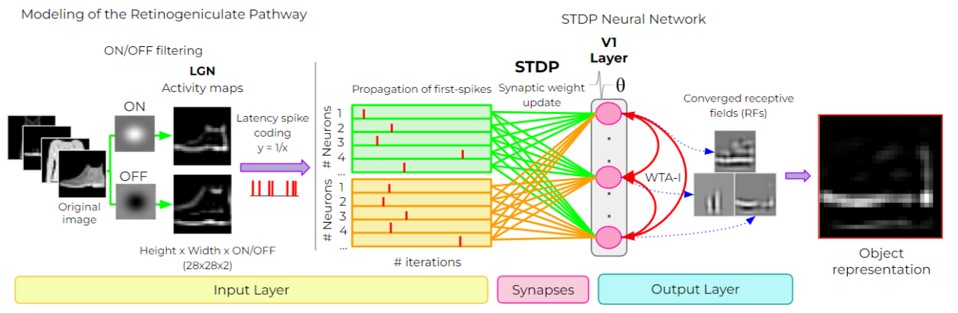

Deep neural networks have surpassed human performance in key visual challenges such as object recognition, but they require large amounts of energy, computation, and memory. In contrast, spiking neural networks (SNNs) have the potential to improve both the efficiency and biological plausibility of object recognition systems. Here we present an SNN model that uses spike-latency coding and winner-take-all inhibition (WTA-I) to efficiently represent visual stimuli from the Fashion MNIST dataset. Stimuli were preprocessed with center-surround receptive fields and then fed to a layer of spiking neurons whose synaptic weights were updated using spike-timing-dependent plasticity (STDP). We investigate how the quality of the represented objects changes under different WTA-I schemes and demonstrate that a network of 150 spiking neurons can efficiently represent objects with as few as 40 spikes. Studying how core object recognition may be implemented using biologically plausible learning rules in SNNs may not only further our understanding of the brain, but also lead to novel and efficient artificial vision systems.
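The processing stages named in the abstract (center-surround filtering, spike-latency coding, winner-take-all competition) can be illustrated with a rough NumPy sketch. This is not the authors' implementation. The function names, kernel size, Gaussian widths, `t_max`, `tau`, and the random weights are all assumptions chosen for illustration. The competition is simplified to a hard top-k mask rather than a dynamic inhibitory circuit, and STDP learning is omitted entirely.

```python
import numpy as np

def gaussian_kernel(size, sigma):
    """Return a normalized 2-D Gaussian kernel of shape (size, size)."""
    ax = np.arange(size) - (size - 1) / 2.0
    xx, yy = np.meshgrid(ax, ax)
    k = np.exp(-(xx**2 + yy**2) / (2.0 * sigma**2))
    return k / k.sum()

def center_surround(image, size=7, sigma_c=1.0, sigma_s=2.0):
    """ON-center difference-of-Gaussians filter (assumed parameters)."""
    dog = gaussian_kernel(size, sigma_c) - gaussian_kernel(size, sigma_s)
    pad = size // 2
    padded = np.pad(image, pad, mode="edge")
    out = np.zeros_like(image, dtype=float)
    for i in range(image.shape[0]):
        for j in range(image.shape[1]):
            out[i, j] = np.sum(padded[i:i + size, j:j + size] * dog)
    return np.maximum(out, 0.0)  # keep only positive (ON) responses

def latency_encode(response, t_max=100.0):
    """Map stronger responses to earlier spike times.

    Units with zero response never spike (time = inf).
    """
    r = response.ravel()
    times = np.full(r.shape, np.inf)
    peak = r.max()
    if peak > 0:
        active = r > 0
        times[active] = t_max * (1.0 - r[active] / peak)
    return times

def winner_take_all(drive, k=1):
    """Hard WTA: keep the k most strongly driven neurons, silence the rest."""
    winners = np.argsort(drive)[::-1][:k]
    mask = np.zeros(drive.shape, dtype=bool)
    mask[winners] = True
    return mask

def represent(image, weights, k=5, tau=20.0):
    """Encode an image as spike latencies and pick k winning neurons.

    Earlier input spikes contribute more drive via exp(-t / tau);
    inputs that never spike contribute zero.
    """
    times = latency_encode(center_surround(image))
    drive = weights @ np.exp(-times / tau)
    return times, winner_take_all(drive, k)

# Hypothetical usage: a random 28x28 "image" and 150 output neurons
# with random (untrained) weights.
rng = np.random.default_rng(0)
image = rng.random((28, 28))
weights = rng.random((150, 28 * 28))
spike_times, active = represent(image, weights, k=5)
print("input spikes:", int(np.isfinite(spike_times).sum()))
print("active output neurons:", int(active.sum()))
```

Varying `k` in this sketch is a crude stand-in for comparing inhibition schemes: a smaller `k` yields a sparser representation with fewer active neurons.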

#CVPR workshop talk by Asst. Prof. Michael Beyeler (@ProfBeyeler, @bionicvisionlab) now available on YouTube, highlighting his recent work on retinal implants and neuro-inspired AI.

— UCSB ComputerScience (@ucsbcs) September 11, 2022

Workshop: What can computer vision learn from visual neuroscience? https://t.co/5K7sxkQsfB https://t.co/WHL4jO793m