A computational model of phosphene appearance for epiretinal prostheses

Abstract

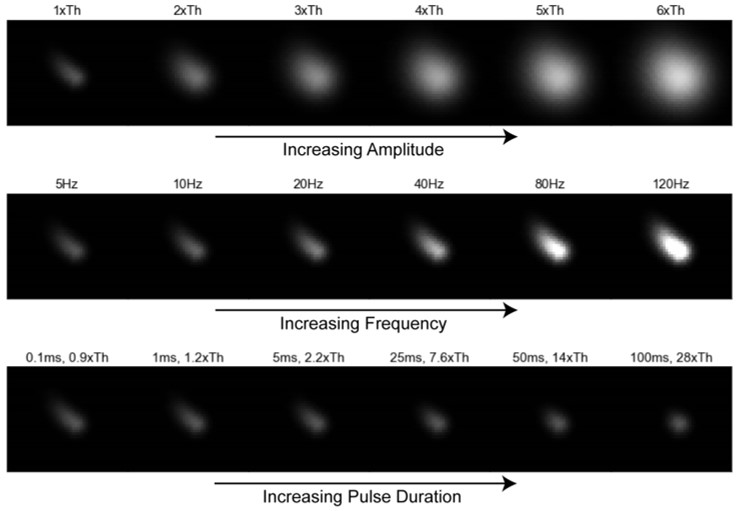

Retinal neuroprostheses are the only FDA-approved treatment option for blinding degenerative diseases. A major outstanding challenge is to develop a computational model that can accurately predict the elicited visual percepts (phosphenes) across a wide range of electrical stimuli. Here we present a phenomenological model that predicts phosphene appearance as a function of stimulus amplitude, frequency, and pulse duration. The model uses a simulated map of nerve fiber bundles in the retina to produce phosphenes with accurate brightness, size, orientation, and elongation. We validate the model on psychophysical data from two independent studies, showing that it generalizes well to new data, even with different stimuli and on different electrodes. Whereas previous models focused on either spatial or temporal aspects of the elicited phosphenes in isolation, we describe a more comprehensive approach that is able to account for many reported visual effects. The model is designed to be flexible and extensible, and can be fit to data from a specific user. Overall, this work is an important first step towards predicting visual outcomes in retinal prosthesis users across a wide range of stimuli.

A computational model of phosphene appearance for epiretinal prostheses https://t.co/gwiByiJpFc by @jacob_granley and @ProfBeyeler

— The vOICe vision 🦣 (@seeingwithsound) August 13, 2021