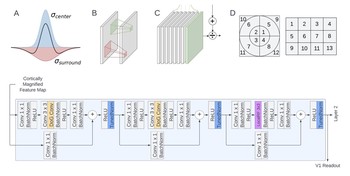

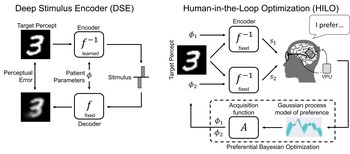

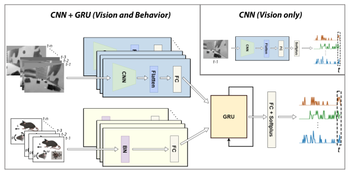

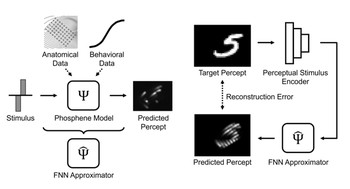

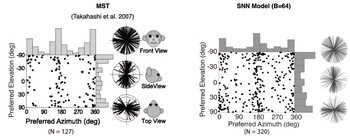

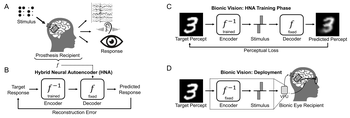

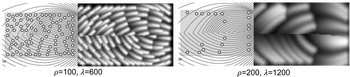

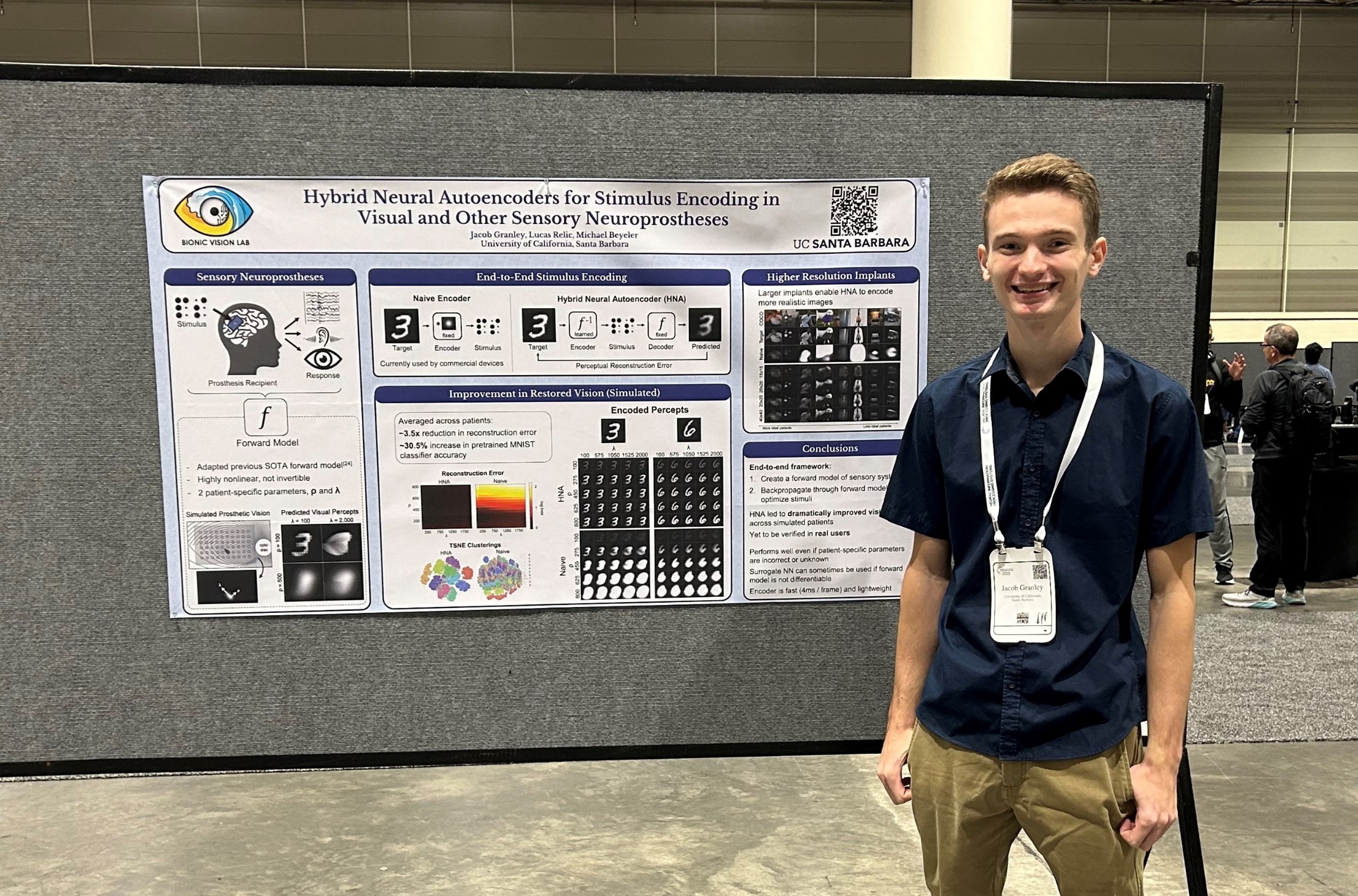

We present a series of analyses of the representations shared between evoked neural activity in the primary visual cortex of a blind human with an intracortical visual prosthesis and the latent visual representations computed in deep neural networks.

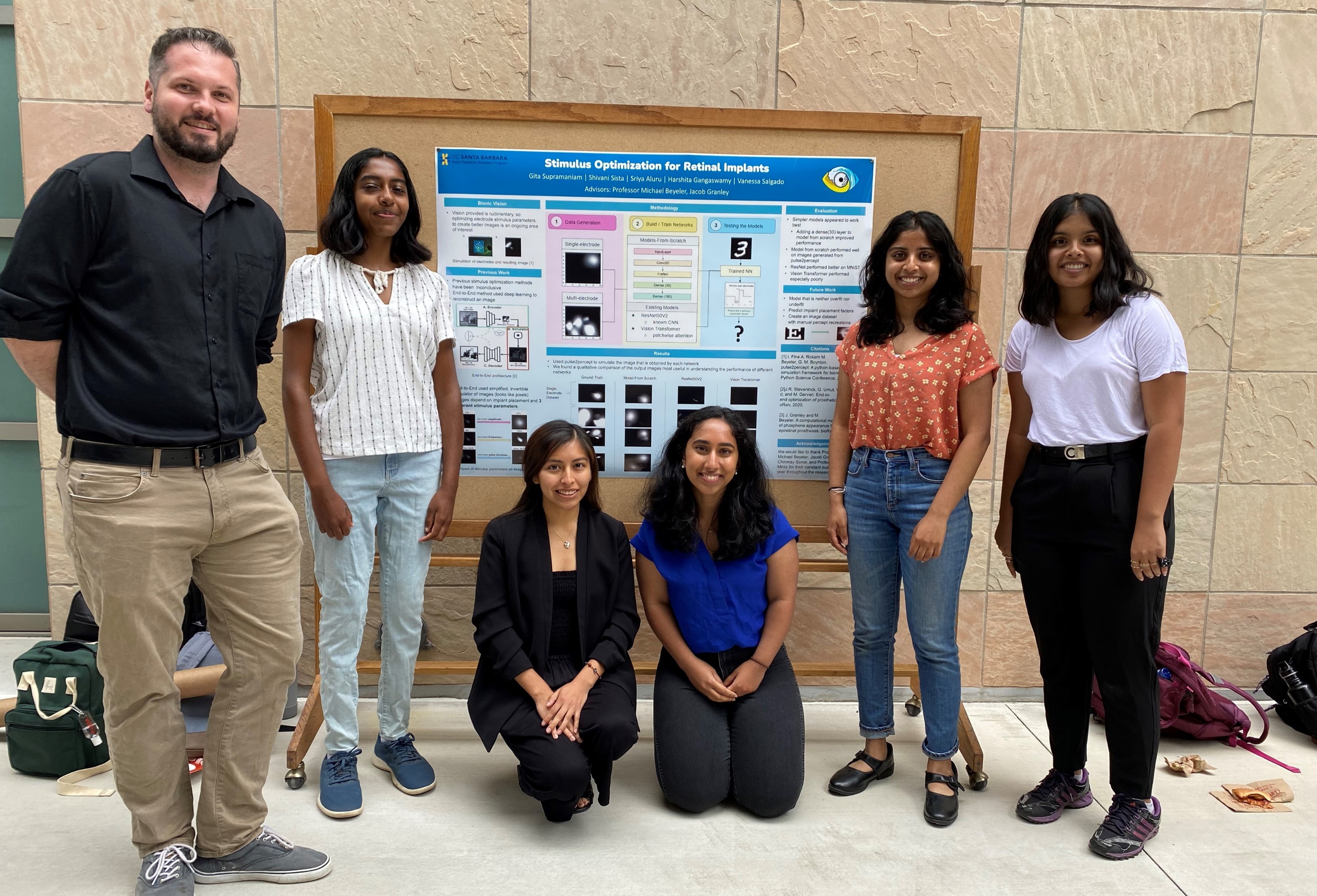

Who We Are

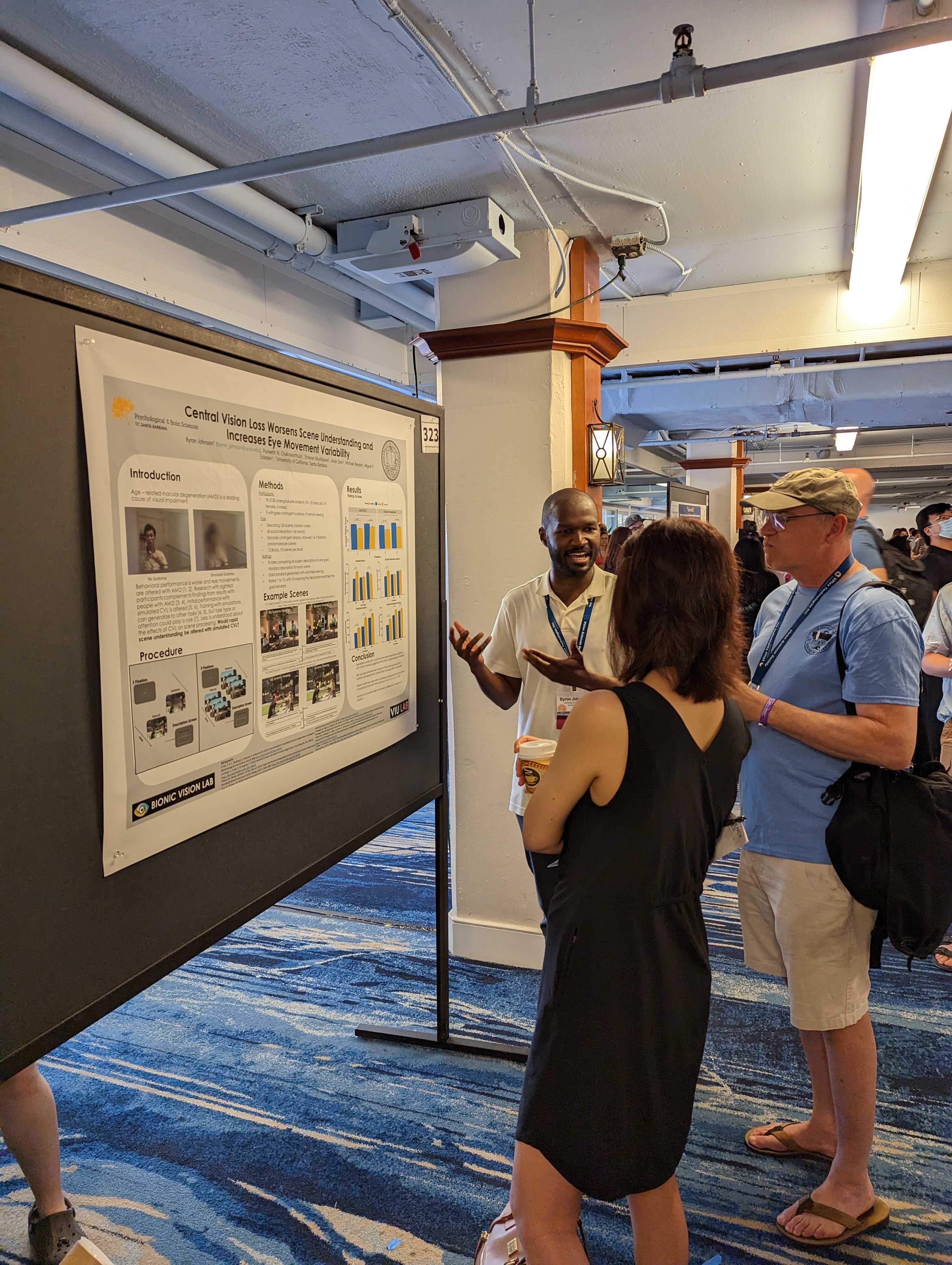

We are an interdisciplinary group interested in exploring the mysteries of human, animal, and artificial vision. Our passion lies in unraveling the science behind bionic technologies that may one day restore useful vision to people living with incurable blindness.

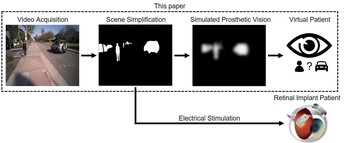

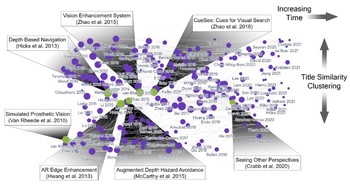

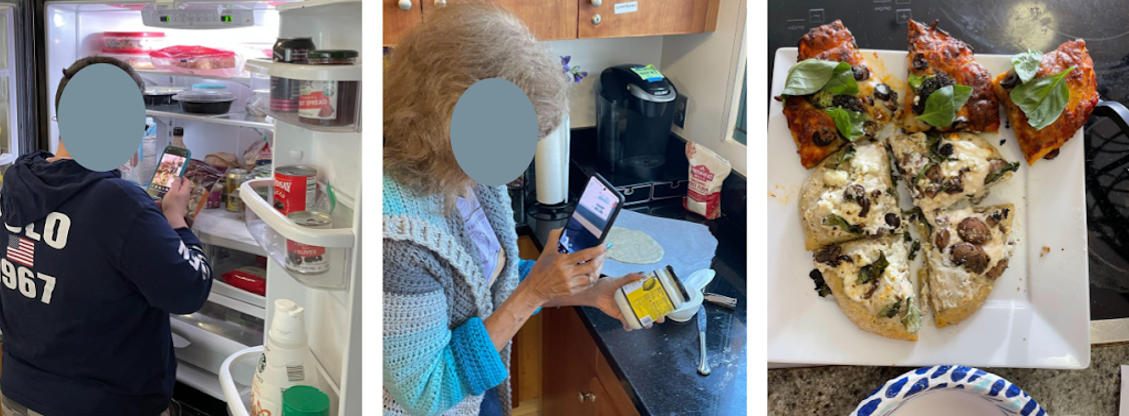

At the heart of our lab is a diverse team that integrates computer science and engineering with neuroscience and psychology. What unites us is a shared fascination with the intricacies of vision and its potential public health applications. Our work goes beyond algorithms and data: our research projects range from studying perception in individuals with visual impairments, to building biophysical models of brain activity, to using virtual and augmented reality to create novel visual accessibility tools.

What sets our lab apart is our connection to the community of implant developers and bionic eye recipients. We don't just theorize; we are committed to transforming our ideas into practical solutions that are rigorously tested across different bionic eye technologies. Our goal is not just to advance scientific understanding, but also to foster greater independence in the lives of people with visual impairments.